This post is co-written with Matt Marzillo from Snowflake.

Today, we are excited to announce that the Snowflake Arctic Instruct model is available through Amazon SageMaker JumpStart to deploy and run inference. Snowflake Arctic is a family of enterprise-grade large language models (LLMs) built by Snowflake to cater to the needs of enterprise users, exhibiting exceptional capabilities (as shown in the following benchmarks) in SQL querying, coding, and accurately following instructions. SageMaker JumpStart is a machine learning (ML) hub that provides access to algorithms, models, and ML solutions so you can quickly get started with ML.

In this post, we walk through how to discover and deploy the Snowflake Arctic Instruct model using SageMaker JumpStart, and provide example use cases with specific prompts.

What is Snowflake Arctic

Snowflake Arctic is an enterprise-focused LLM that delivers top-tier enterprise intelligence among open LLMs with highly competitive cost-efficiency. It achieves this through a Dense Mixture of Experts (MoE) hybrid transformer architecture and efficient training techniques. Arctic combines a 10-billion-parameter dense transformer model with a residual 128×3.66B MoE MLP, for a total of 480 billion parameters spread across 128 fine-grained experts, and uses top-2 gating to choose 17 billion active parameters. The large number of total parameters gives Snowflake Arctic enlarged capacity for enterprise intelligence, while the moderate number of active parameters keeps training and inference resource-efficient.
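The parameter counts quoted above can be sanity-checked with a little arithmetic. The following is a rough sketch that assumes the totals are plain sums of the dense model and the expert MLPs; the variable names are ours, and the figures (in billions) come from the paragraph above.

```python
# Back-of-the-envelope check of the parameter counts quoted above.
# Assumption: total and active counts are simple sums of the dense
# transformer and the expert MLPs (all figures in billions).
DENSE_B = 10.0       # dense transformer parameters
EXPERT_B = 3.66      # parameters per MoE expert
NUM_EXPERTS = 128    # fine-grained experts
TOP_K = 2            # experts selected per token by top-2 gating

total_b = DENSE_B + NUM_EXPERTS * EXPERT_B
active_b = DENSE_B + TOP_K * EXPERT_B

print(f"total  ~ {total_b:.1f}B parameters")
print(f"active ~ {active_b:.1f}B parameters")
```

Under this assumption the sums land close to the rounded 480 billion total and 17 billion active parameters cited in the text.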

Snowflake Arctic is trained with a three-stage data curriculum with different data composition focusing on generic skills in the first phase (1 trillion tokens, the majority from web data), and enterprise-focused skills in the next two phases (1.5 trillion and 1 trillion tokens, respectively, with more code, SQL, and STEM data). This helps the Snowflake Arctic model set a new baseline of enterprise intelligence while being cost-effective.

In addition to the cost-effective training, Snowflake Arctic also comes with a number of innovations and optimizations to run inference efficiently. At small batch sizes, inference is memory bandwidth bound, and Snowflake Arctic can require up to four times fewer memory reads than other openly available models, leading to faster inference. At very large batch sizes, inference becomes compute bound, and Snowflake Arctic requires up to four times less compute than other openly available models. Snowflake Arctic models are available under an Apache 2.0 license, which provides ungated access to weights and code. All the data recipes and research insights will also be made available for customers.

What is SageMaker JumpStart

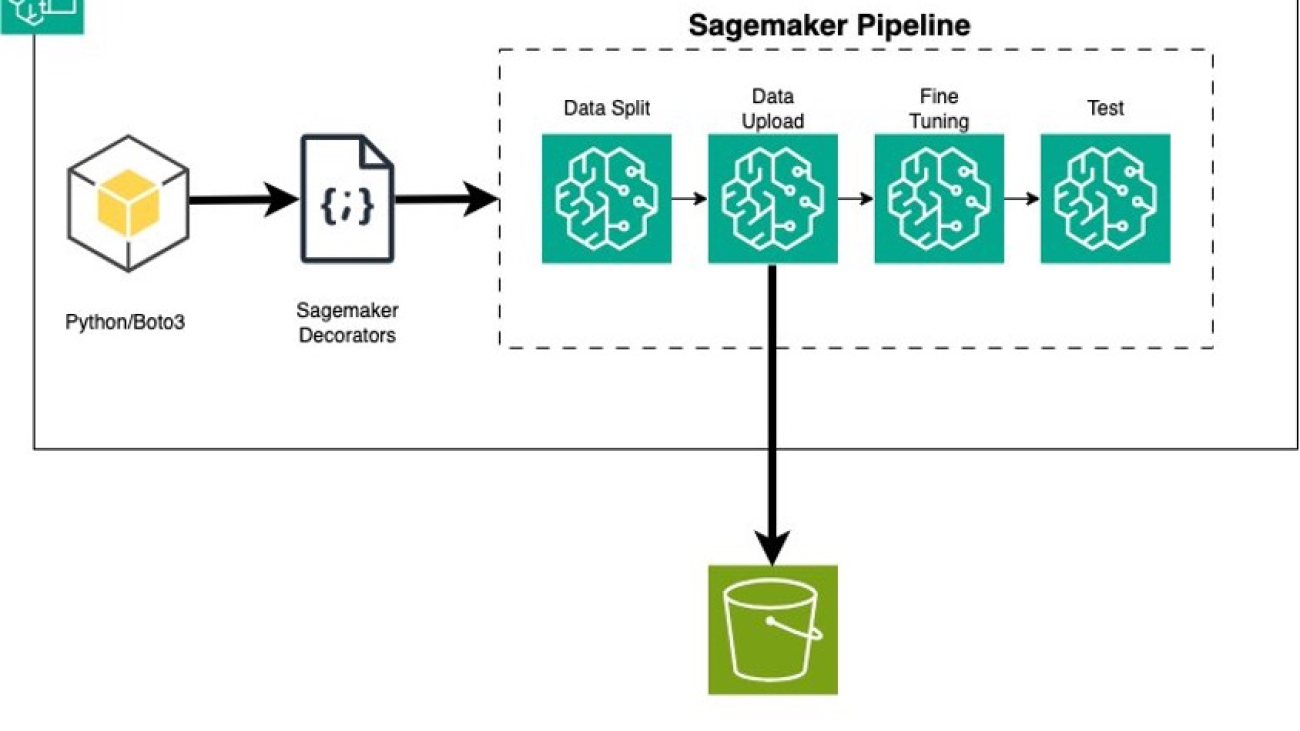

With SageMaker JumpStart, you can choose from a broad selection of publicly available foundation models (FMs). ML practitioners can deploy FMs to dedicated Amazon SageMaker instances from a network isolated environment and customize models using SageMaker for model training and deployment. You can now discover and deploy the Arctic Instruct model with a few clicks in Amazon SageMaker Studio or programmatically through the SageMaker Python SDK, enabling you to derive model performance and machine learning operations (MLOps) controls with SageMaker features such as Amazon SageMaker Pipelines, Amazon SageMaker Debugger, or container logs. The model is deployed in an AWS secure environment and under your virtual private cloud (VPC) controls, helping provide data security. The Snowflake Arctic Instruct model is available today for deployment and inference in SageMaker Studio in the us-east-2 AWS Region, with planned future availability in additional Regions.

Discover models

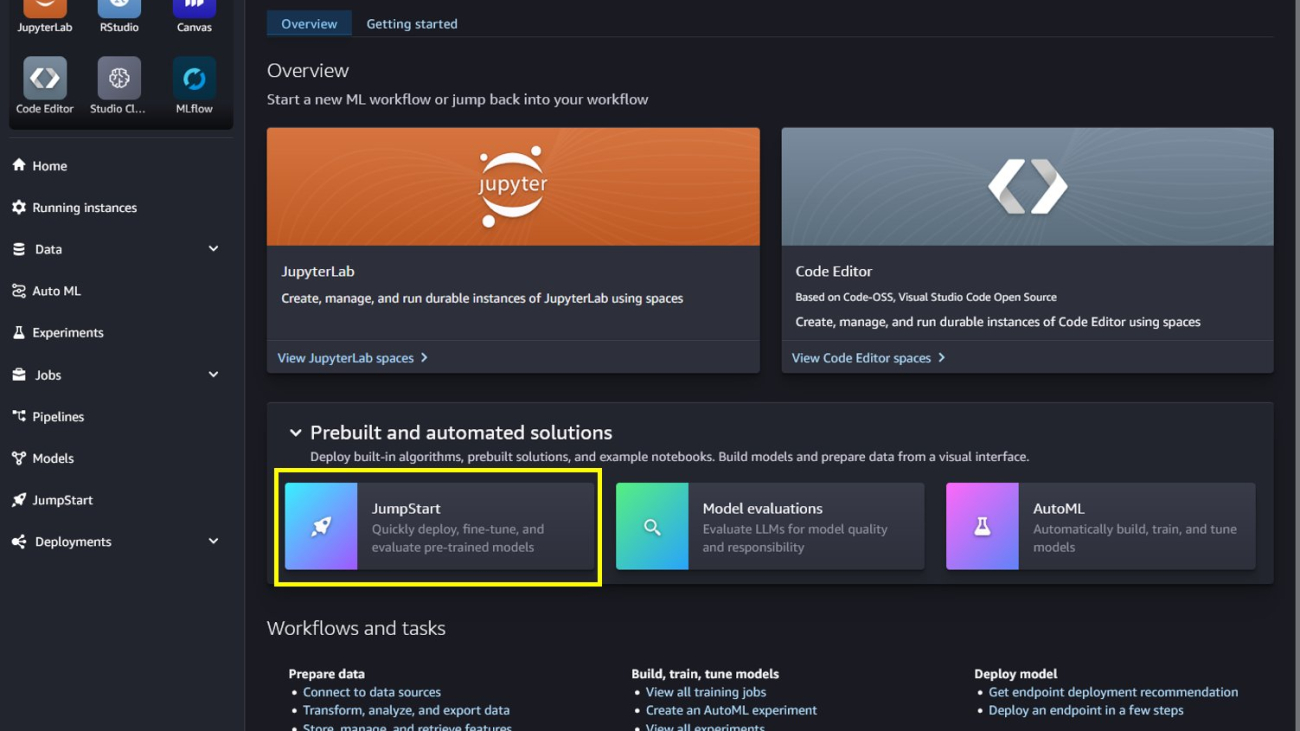

You can access the FMs through SageMaker JumpStart in the SageMaker Studio UI and the SageMaker Python SDK. In this section, we go over how to discover the models in SageMaker Studio.

SageMaker Studio is an integrated development environment (IDE) that provides a single web-based visual interface where you can access purpose-built tools to perform all ML development steps, from preparing data to building, training, and deploying your ML models. For more details on how to get started and set up SageMaker Studio, refer to Amazon SageMaker Studio.

In SageMaker Studio, you can access SageMaker JumpStart, which contains pre-trained models, notebooks, and prebuilt solutions, under Prebuilt and automated solutions.

From the SageMaker JumpStart landing page, you can discover various models by browsing through different hubs, which are named after model providers. You can find the Snowflake Arctic Instruct model in the Hugging Face hub. If you don’t see the Arctic Instruct model, update your SageMaker Studio version by shutting down and restarting. For more information, refer to Shut down and Update Studio Classic Apps.

You can also find the Snowflake Arctic Instruct model by searching for “Snowflake” in the search field.

You can choose the model card to view details about the model such as license, data used to train, and how to use the model. You will also find two options to deploy the model, Deploy and Preview notebooks, which will deploy the model and create an endpoint.

Deploy the model in SageMaker Studio

When you choose Deploy in SageMaker Studio, deployment will start.

You can monitor the progress of the deployment on the endpoint details page that you’re redirected to.

Deploy the model through a notebook

Alternatively, you can choose Open notebook to deploy the model through the example notebook. The example notebook provides end-to-end guidance on how to deploy the model for inference and clean up resources.

To deploy using the notebook, you start by selecting an appropriate model, specified by the model_id. You can deploy any of the selected models on SageMaker with the following code:

from sagemaker.jumpstart.model import JumpStartModel

model = JumpStartModel(model_id="huggingface-llm-snowflake-arctic-instruct-vllm")
predictor = model.deploy()

This deploys the model on SageMaker with default configurations, including the default instance type and default VPC configurations. You can change these configurations by specifying non-default values in JumpStartModel. To learn more, refer to API documentation.
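As a rough illustration of overriding the defaults, the following sketch passes an instance type to `JumpStartModel` and an endpoint name to `deploy`. The helper names `build_model_kwargs` and `deploy_arctic` are hypothetical, and any instance type or endpoint name you supply is your own choice rather than a value confirmed by this post. The SDK import is deferred so the configuration helper can be exercised without AWS access.

```python
from typing import Any, Dict, Optional

MODEL_ID = "huggingface-llm-snowflake-arctic-instruct-vllm"  # model_id from this post


def build_model_kwargs(instance_type: Optional[str] = None) -> Dict[str, Any]:
    """Collect constructor arguments, adding only the overrides that were supplied."""
    kwargs: Dict[str, Any] = {"model_id": MODEL_ID}
    if instance_type is not None:
        kwargs["instance_type"] = instance_type
    return kwargs


def deploy_arctic(instance_type: Optional[str] = None, endpoint_name: Optional[str] = None):
    """Deploy the model; needs the sagemaker SDK, AWS credentials, and an execution role."""
    # Imported lazily so build_model_kwargs works without the SDK installed.
    from sagemaker.jumpstart.model import JumpStartModel

    model = JumpStartModel(**build_model_kwargs(instance_type))
    # endpoint_name=None lets SageMaker generate a name, as in the default flow.
    return model.deploy(endpoint_name=endpoint_name)
```

Calling `deploy_arctic()` with no arguments should behave like the default deployment shown above.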

Run inference

After you deploy the model, you can run inference against the deployed endpoint through the SageMaker predictor API. Snowflake Arctic Instruct accepts the chat history between the user and the assistant and generates the next response.

predictor.predict(payload)

Inference parameters control the text generation process at the endpoint. The max_new_tokens parameter controls the size of the output generated by the model. This may not be the same as the number of words because the vocabulary of the model is not the same as the English language vocabulary. The temperature parameter controls the randomness in the output. A higher temperature results in more creative outputs that are also more prone to hallucination. All the inference parameters are optional.

The model accepts formatted instructions where conversation roles must start with a prompt from the user and alternate between user instructions and the assistant. The instruction format must be strictly respected, otherwise the model will generate suboptimal outputs. The template to build a prompt for the model is defined as follows:

<|im_start|>system
{system_message} <|im_end|>
<|im_start|>user
{human_message} <|im_end|>
<|im_start|>assistant

<|im_start|> and <|im_end|> are special tokens for beginning of string (BOS) and end of string (EOS). The model can contain multiple conversation turns between system, user, and assistant, allowing for the incorporation of few-shot examples to enhance the model’s responses.

The following code shows how you can format the prompt in instruction format:

<|im_start|>user\n5x + 35 = 7x -60 + 10. Solve for x<|im_end|>\n<|im_start|>assistant\n

from typing import Dict, List

def format_instructions(instructions: List[Dict[str, str]]) -> str:
    """Format instructions where conversation roles must alternate system/user/assistant/user/assistant/..."""
    prompt: List[str] = []
    for instruction in instructions:
        if instruction["role"] == "system":
            prompt.extend(["<|im_start|>system\n", (instruction["content"]).strip(), "<|im_end|>\n"])
        elif instruction["role"] == "user":
            prompt.extend(["<|im_start|>user\n", (instruction["content"]).strip(), "<|im_end|>\n"])
        else:
            raise ValueError(f"Invalid role: {instruction['role']}. Role must be either 'user' or 'system'.")
    # Open the assistant turn so the model generates the reply.
    prompt.extend(["<|im_start|>assistant\n"])
    return "".join(prompt)

def print_instructions(prompt: str, response: List[Dict[str, str]]) -> None:
    # ANSI escape codes for bold text in the terminal.
    bold, unbold = '\033[1m', '\033[0m'
    print(f"{bold}> Input{unbold}\n{prompt}\n\n{bold}> Output{unbold}\n{response[0]['generated_text'].strip()}\n")
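The `format_instructions` helper rejects assistant turns, so it cannot carry the few-shot examples mentioned earlier. The following is a minimal sketch of a variant that accepts them. It assumes completed assistant turns are closed with `<|im_end|>` in the same way as user turns, which this post does not confirm; `format_chat` and the sample messages are hypothetical.

```python
from typing import Dict, List

_ROLES = {"system", "user", "assistant"}


def format_chat(messages: List[Dict[str, str]]) -> str:
    """Render system/user/assistant turns in the <|im_start|>...<|im_end|> format."""
    parts: List[str] = []
    for message in messages:
        role = message["role"]
        if role not in _ROLES:
            raise ValueError(f"Invalid role: {role}")
        parts.append(f"<|im_start|>{role}\n{message['content'].strip()}<|im_end|>\n")
    # Leave the final assistant turn open so the model writes the reply.
    parts.append("<|im_start|>assistant\n")
    return "".join(parts)


# Illustrative few-shot conversation: one worked example, then the real question.
few_shot = [
    {"role": "system", "content": "Answer with a single number."},
    {"role": "user", "content": "2x = 10. Solve for x"},
    {"role": "assistant", "content": "5"},
    {"role": "user", "content": "5x + 35 = 7x - 60 + 10. Solve for x"},
]
prompt = format_chat(few_shot)
print(prompt)
```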

In the following sections, we provide example prompts for different enterprise-focused use cases.

Long text summarization

You can use Snowflake Arctic Instruct for custom tasks like summarizing long-form text into JSON-formatted output. Through text generation, you can perform a variety of tasks, such as text summarization, language translation, code generation, sentiment analysis, and more. The input payload to the endpoint looks like the following code:

payload = {
    "inputs": str,
    # optional
    "parameters": {"max_new_tokens": int, "top_p": float, "temperature": float}
}
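Because every inference parameter is optional, a small builder can keep request bodies tidy. The following is a sketch of one way to do that with the payload shape above. The function name `build_payload` and the validation ranges are our assumptions, not documented limits of the endpoint.

```python
from typing import Any, Dict, Optional


def build_payload(
    prompt: str,
    max_new_tokens: Optional[int] = None,
    temperature: Optional[float] = None,
    top_p: Optional[float] = None,
) -> Dict[str, Any]:
    """Build a request body that includes only the parameters that were set."""
    # Assumed sanity checks; the endpoint may accept a different range.
    if max_new_tokens is not None and max_new_tokens <= 0:
        raise ValueError("max_new_tokens must be a positive integer")
    if temperature is not None and temperature < 0:
        raise ValueError("temperature must be non-negative")
    if top_p is not None and not 0 < top_p <= 1:
        raise ValueError("top_p must be in (0, 1]")

    candidates = {
        "max_new_tokens": max_new_tokens,
        "temperature": temperature,
        "top_p": top_p,
    }
    parameters = {name: value for name, value in candidates.items() if value is not None}

    payload: Dict[str, Any] = {"inputs": prompt}
    if parameters:
        payload["parameters"] = parameters
    return payload


print(build_payload("Hello", max_new_tokens=64, temperature=0.1))
```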

The following is an example of a prompt and the text generated by the model. The inference parameters used for each example are shown in its request payload.

The input is as follows:

instructions = [
    {
        "role": "user",
        "content": """Summarize this transcript in less than 200 words.
Put the product name, defect and summary in JSON format.

Transcript:

Customer: Hello
Agent: Hi there, I hope you're having a great day! To better assist you, could you please provide your first and last name and the company you are calling from?
Customer: Sure, my name is Jessica Turner and I'm calling from Mountain Ski Adventures.
Agent: Thanks, Jessica. What can I help you with today?
Customer: Well, we recently ordered a batch of XtremeX helmets, and upon inspection, we noticed that the buckles on several helmets are broken and won't secure the helmet properly.
Agent: I apologize for the inconvenience this has caused you. To confirm, is your order number 68910?
Customer: Yes, that's correct.
Agent: Thank you for confirming. I'm going to look into this issue and see what we can do to correct it. Would you prefer a refund or a replacement for the damaged helmets?
Customer: A replacement would be ideal, as we still need the helmets for our customers.
Agent: I understand. I will start the process to send out replacements for the damaged helmets as soon as possible. Can you please specify the quantity of helmets with broken buckles?
Customer: There are ten helmets with broken buckles in total.
Agent: Thank you for providing me with the quantity. We will expedite a new shipment of ten XtremeX helmets with functioning buckles to your location. You should expect them to arrive within 3-5 business days.
Customer: Thank you for your assistance, I appreciate it.
Agent: You're welcome, Jessica! If you have any other questions or concerns, please don't hesitate to contact us. Have a great day!
"""
    }
]

prompt = format_instructions(instructions)

inputs = {
    "inputs": prompt,
    "parameters": {
        "temperature": 0.1,
        "top_p": 0.95,
        "max_new_tokens": 512,
        "do_sample": False
    }
}

response = predictor.predict(inputs)
print_instructions(prompt, response)

We get the following output:

> Output

{
    "product_name": "XtremeX helmets",
    "defect": "broken buckles",
    "summary": "Customer reports that several XtremeX helmets have broken buckles that won't secure the helmet properly. They prefer a replacement as they still need the helmets for their customers. Agent confirms the order number and will send out replacements for the damaged helmets within 3-5 business days."
}
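Downstream code usually needs the JSON as a Python object rather than as text. The following is a minimal sketch of one way to pull it out of the generated text, assuming the response contains a single JSON object, possibly surrounded by extra prose. The helper name `extract_json` is hypothetical, and `sample` is a shortened stand-in for the output above with the summary text elided.

```python
import json
from typing import Any, Dict


def extract_json(generated_text: str) -> Dict[str, Any]:
    """Parse the span from the first '{' to the last '}' as a JSON object."""
    start = generated_text.find("{")
    end = generated_text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in model output")
    return json.loads(generated_text[start : end + 1])


# Shortened stand-in for the model output; the summary text is elided.
sample = (
    "Here is the summary:\n"
    '{"product_name": "XtremeX helmets", "defect": "broken buckles", "summary": "..."}'
)
record = extract_json(sample)
print(record["product_name"], "-", record["defect"])
```

If the model returns malformed JSON, `json.loads` raises `json.JSONDecodeError`, which a caller may want to catch and retry on.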

Code generation

Using the preceding example, we can use code generation prompts as follows:

instructions = [
    {
        "role": "user",
        "content": "Write a function in Python to write a json file:"
    }
]

prompt = format_instructions(instructions)

inputs = {
    "inputs": prompt,
    "parameters": {
        "temperature": 0.1,
        "top_p": 0.95,
        "max_new_tokens": 400,
        "do_sample": False
    }
}

response = predictor.predict(inputs)
print_instructions(prompt, response)

The preceding code uses Snowflake Arctic Instruct to generate a Python function that writes a JSON file. It defines a payload dictionary with the input prompt “Write a function in Python to write a json file:” and some parameters to control the generation process, like the maximum number of tokens to generate and whether to enable sampling. It sends this payload to the deployed endpoint through the predictor, receives the generated text response, and prints it to the console. The printed output should be the Python function for writing a JSON file, as requested in the prompt.

The following is the output:

> Input

<|im_start|>user

Write a function in Python to write a json file:<|im_end|>

<|im_start|>assistant

> Output

Here is a simple function in Python that writes a Python dictionary to a JSON file:

```python
import json

def write_json(data, filename):
    with open(filename, 'w') as f:
        json.dump(data, f)
```

You can use this function to write a Python dictionary to a file like this:

```python
data = {
    "name": "John",
    "age": 30,
    "city": "New York"
}

write_json(data, 'output.json')
```

This will create a file named `output.json` in the same directory as your Python script, and write the `data` dictionary to that file in JSON format.

The generated code defines a write_json function that takes a Python object and a file name and writes the object to that file as JSON. The output also includes a usage example that writes a sample dictionary to output.json, illustrating the model’s natural language processing and code generation capabilities.
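Generated code is worth a quick check before you rely on it. The following sketch copies `write_json` from the model output and confirms that a dictionary survives a write and read round trip. The temporary directory is our addition so the check leaves no files behind.

```python
import json
import os
import tempfile


def write_json(data, filename):
    # Same function as in the model output above.
    with open(filename, "w") as f:
        json.dump(data, f)


data = {"name": "John", "age": 30, "city": "New York"}

# Write to a throwaway directory, then read the file back for comparison.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "output.json")
    write_json(data, path)
    with open(path) as f:
        loaded = json.load(f)

print("round trip ok:", loaded == data)
```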

Mathematics and reasoning

Snowflake Arctic Instruct also shows strength in mathematical reasoning. Let’s use the following prompt to test it:

instructions = [
    {
        "role": "user",
        "content": "I bought an ice cream for 6 kids. Each cone was $1.25 and I paid with a $10 bill. How many dollars did I get back? Explain first before answering."
    }
]

prompt = format_instructions(instructions)

inputs = {
    "inputs": prompt,
    "parameters": {
        "temperature": 0.6,
        "top_p": 0.9,
        "max_new_tokens": 200,
        "do_sample": False
    }
}

response = predictor.predict(inputs)
print_instructions(prompt, response)

The following is the output:

> Input

<|im_start|>user

I bought an ice cream for 6 kids. Each cone was $1.25 and I paid with a $10 bill. How many dollars did I get back? Explain first before answering.<|im_end|>

<|im_start|>assistant

> Output

To solve this problem, we first need to calculate the total cost of the ice cream cones and then subtract that amount from the $10 bill to find out how much change was received.

Step 1: Calculate the total cost of the ice cream cones

There are 6 kids, and each cone costs $1.25. So, the total cost is:

6 kids * $1.25/cone = $7.50

Step 2: Calculate the change received

You paid with a $10 bill, so to find out how much change you got back, subtract the total cost from the $10 bill:

$10 - $7.50 = $2.50

So, you received $2.50 in change.

The preceding output shows Snowflake Arctic’s capability to comprehend natural language prompts involving mathematical reasoning, break them down into logical steps, and generate human-like explanations and solutions.
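The model's arithmetic is easy to verify independently. The following short check uses `Decimal` to avoid floating-point rounding in currency values; the variable names are ours.

```python
from decimal import Decimal

kids = 6
price_per_cone = Decimal("1.25")
paid = Decimal("10")

# Step 1: total cost of the cones. Step 2: change from the $10 bill.
total_cost = kids * price_per_cone
change = paid - total_cost

print(f"total cost: ${total_cost}, change: ${change}")
```

Both values agree with the model's answer of $7.50 spent and $2.50 in change.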

SQL generation

The Snowflake Arctic Instruct model is also adept at generating SQL queries from natural language prompts, reflecting its enterprise-focused training. We test that capability with the following prompt:

question = "Show the average price by cut and sort the results by average price in descending order"

context = """
Here is the table name <tableName> ML_HOL_DB.ML_HOL_SCHEMA.DIAMONDS </tableName>
<tableDescription> This table has data on diamond sales from our favorite diamond dealer. </tableDescription>
Here are the columns of the ML_HOL_DB.ML_HOL_SCHEMA.DIAMONDS
<columns>\n\n CARAT, CUT, COLOR, CLARITY, DEPTH, TABLE_PCT, PRICE, X, Y, Z \n\n</columns>
"""

instructions = [
    {
        "role": "user",
        "content": """You will be acting as an AI Snowflake SQL Expert named Snowflake Cortex Assistant.
Your goal is to give correct, executable sql query to users.
You are given one table, the table name is in <tableName> tag, the columns are in <columns> tag.
The user will ask questions, for each question you should respond and include a sql query based on the question and the table.

{context}

Here are 7 critical rules for the interaction you must abide:
<rules>
1. You MUST MUST wrap the generated sql code within ``` sql code markdown in this format e.g
```sql
(select 1) union (select 2)
```
2. If I don't tell you to find a limited set of results in the sql query or question, you MUST limit the number of responses to 10.
3. Text / string where clauses must be fuzzy match e.g ilike %keyword%
4. Make sure to generate a single snowflake sql code, not multiple.
5. YOU SHOULD USE ONLY THE COLUMN NAMES IN <COLUMNS>, AND THE TABLE GIVEN IN <TABLENAME>.
6. DO NOT put numerical at the very front of sql variable.
7. BE CONCISE. DO NOT SHOW ANY TEXT AFTER THE SQL QUERY! ONLY SHOW THE SQL QUERY AND NOTHING ELSE!
</rules>

Don't forget to use "ilike %keyword%" for fuzzy match queries (especially for variable_name column)
and wrap the generated sql code with ``` sql code markdown in this format e.g:
```sql
(select 1) union (select 2)
```

For each question from the user, make sure to include a SQL QUERY in your response.

Question: {question}

Answer: the most important piece of information is the SQL QUERY. BE CONCISE AND JUST SHOW THE SQL QUERY. DO NOT SHOW ANY TEXT AFTER THE SQL QUERY!')) as response
""".format(context=context, question=question)
    }
]

prompt = format_instructions(instructions)

inputs = {
    "inputs": prompt,
    "parameters": {
        "temperature": 0.1,
        "top_p": 0.95,
        "max_new_tokens": 512,
        "do_sample": False
    }
}

response = predictor.predict(inputs)
print_instructions(prompt, response)

The following is the output:

> Output

SELECT CUT, AVG(PRICE) as AVG_PRICE FROM ML_HOL_DB.ML_HOL_SCHEMA.DIAMONDS

GROUP BY CUT ORDER BY AVG_PRICE DESC LIMIT 10;

The output shows that Snowflake Arctic Instruct inferred the specific fields of interest in the table (CUT and PRICE) and produced a single aggregate query that groups by cut, sorts by average price in descending order, and applies the default limit of 10 rows required by rule 2 of the prompt.
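To see what the generated query computes, you can run it against a small in-memory table. The following sketch uses SQLite from the Python standard library rather than Snowflake, so the fully qualified table name is shortened to `DIAMONDS`, and the rows are toy values invented for illustration, not data from the post.

```python
import sqlite3

# Toy rows invented for this sketch; they are not from the DIAMONDS table in the post.
rows = [("Ideal", 400.0), ("Ideal", 600.0), ("Premium", 900.0), ("Good", 300.0)]

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE DIAMONDS (CUT TEXT, PRICE REAL)")
conn.executemany("INSERT INTO DIAMONDS (CUT, PRICE) VALUES (?, ?)", rows)

# The generated query, with the Snowflake database and schema prefix removed.
query = """
SELECT CUT, AVG(PRICE) AS AVG_PRICE FROM DIAMONDS
GROUP BY CUT ORDER BY AVG_PRICE DESC LIMIT 10;
"""
result = conn.execute(query).fetchall()
conn.close()

for cut, avg_price in result:
    print(cut, avg_price)
```

Each cut appears once with its average price, ordered from highest to lowest, which matches the question asked in the prompt.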

Clean up

After you’re done running the notebook, delete all resources that you created in the process so your billing is stopped. Use the following code:

predictor.delete_model()

predictor.delete_endpoint()
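If the first call fails, for example because the model was already removed, the endpoint could be left running. The following is a sketch of a more defensive cleanup that attempts both deletions and reports any errors afterward. The `cleanup` helper and the `_FakePredictor` stand-in are hypothetical and exist only so the logic can be exercised without AWS access.

```python
from typing import List


def cleanup(predictor) -> List[Exception]:
    """Try both deletions, collecting errors instead of stopping at the first one."""
    errors: List[Exception] = []
    for step in (predictor.delete_model, predictor.delete_endpoint):
        try:
            step()
        except Exception as exc:  # keep going so the endpoint is still removed
            errors.append(exc)
    return errors


class _FakePredictor:
    """Stand-in used only to exercise cleanup() without a real endpoint."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def delete_model(self) -> None:
        self.calls.append("delete_model")
        raise RuntimeError("model already deleted")

    def delete_endpoint(self) -> None:
        self.calls.append("delete_endpoint")


fake = _FakePredictor()
errors = cleanup(fake)
print(fake.calls, len(errors))
```

With a real predictor, you would call `cleanup(predictor)` and inspect the returned list for anything that needs manual follow-up.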

If you deployed the endpoint from the SageMaker Studio console, you can delete it by choosing Delete on the endpoint details page.

Conclusion

In this post, we showed you how to get started with the Snowflake Arctic Instruct model in SageMaker Studio, and provided example prompts for multiple enterprise use cases. Because FMs are pre-trained, they can also help lower training and infrastructure costs and enable customization for your use case. Check out SageMaker JumpStart in SageMaker Studio now to get started. To learn more, refer to the following resources:

About the Authors

Natarajan Chennimalai Kumar – Principal Solutions Architect, 3P Model Providers, AWS

Pavan Kumar Rao Navule – Solutions Architect, AWS

Nidhi Gupta – Sr Partner Solutions Architect, AWS

Bosco Albuquerque – Sr Partner Solutions Architect, AWS

Matt Marzillo – Sr Partner Engineer, Snowflake

Nithin Vijeaswaran – Solutions Architect, AWS

Armando Diaz – Solutions Architect, AWS

Supriya Puragundla – Sr Solutions Architect, AWS

Jin Tan Ruan – Prototyping Developer, AWS

Read More

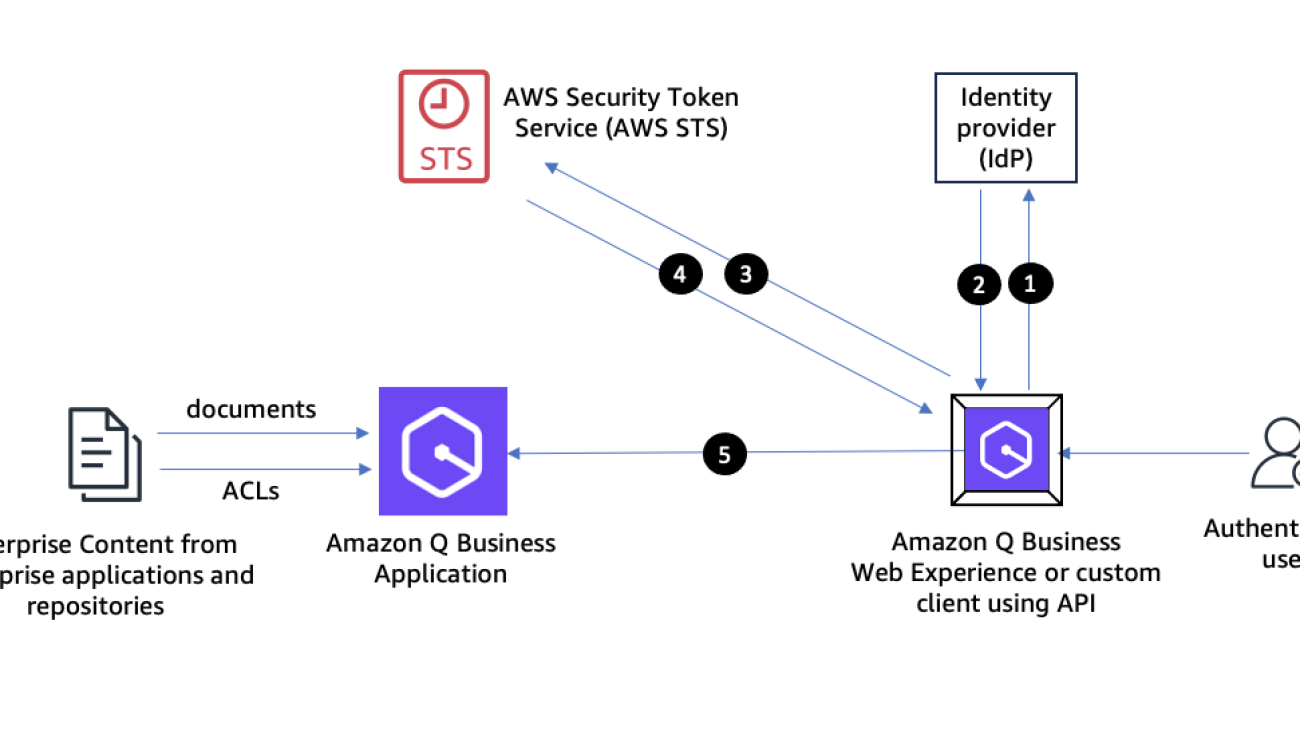

Abhinav Jawadekar is a Principal Solutions Architect in the Amazon Q Business service team at AWS. Abhinav works with AWS customers and partners to help them build generative AI solutions on AWS.

Abhinav Jawadekar is a Principal Solutions Architect in the Amazon Q Business service team at AWS. Abhinav works with AWS customers and partners to help them build generative AI solutions on AWS. Venky Nagapudi is a Senior Manager of Product Management for Q Business, Amazon Comprehend and Amazon Translate. His focus areas on Q Business include user identity management, and using offline intelligence from documents to improve Q Business accuracy and helpfulness.

Venky Nagapudi is a Senior Manager of Product Management for Q Business, Amazon Comprehend and Amazon Translate. His focus areas on Q Business include user identity management, and using offline intelligence from documents to improve Q Business accuracy and helpfulness.

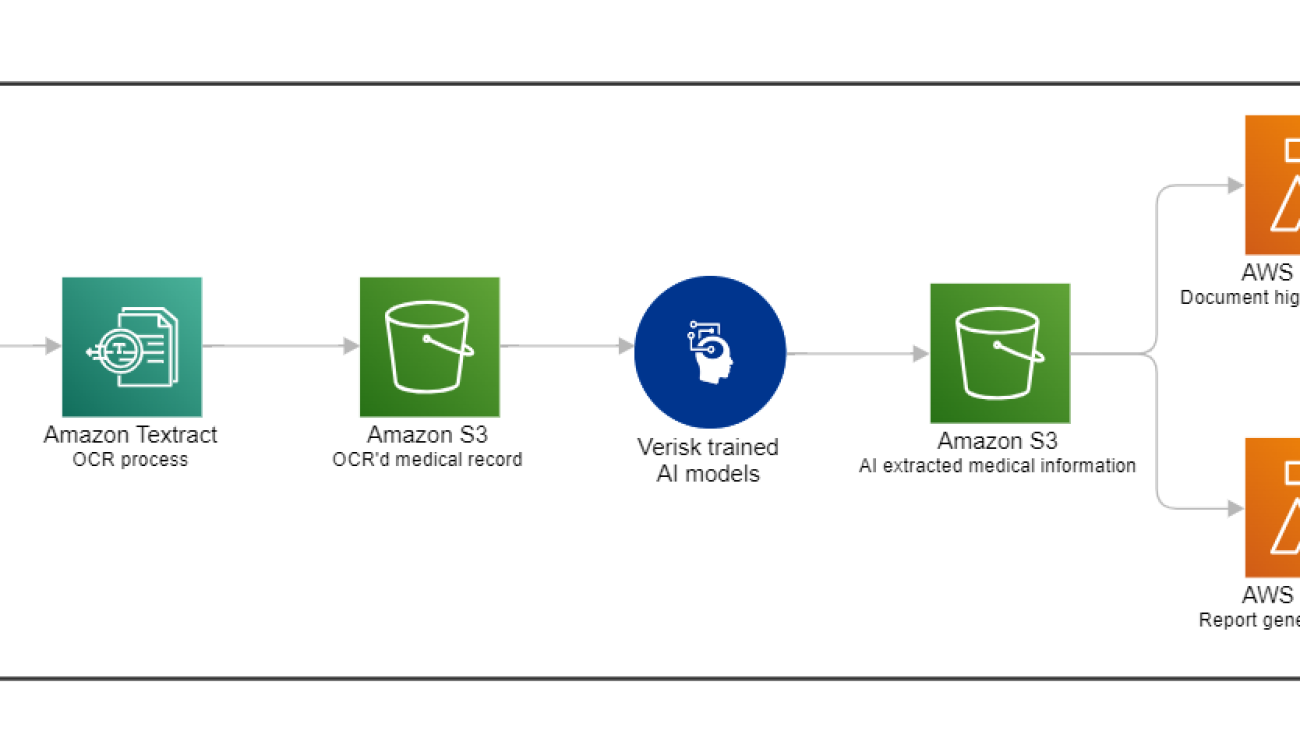

Sneha Godbole is a AVP of Analytics at Verisk. She has partnered with Verisk leaders on creating Discovery Navigator, an AI powered tool that automatically enables identification and retrieval of key data points within large unstructured documents. Sneha holds two Master of Science degrees (from University of Utah and SUNY Buffalo) and a Data Science Specialization certificate from Johns Hopkins University. Prior to joining Verisk, Sneha has worked as a software developer in France to build android solutions and collaborated on a paper publication with Brigham Young University, Utah.

Sneha Godbole is a AVP of Analytics at Verisk. She has partnered with Verisk leaders on creating Discovery Navigator, an AI powered tool that automatically enables identification and retrieval of key data points within large unstructured documents. Sneha holds two Master of Science degrees (from University of Utah and SUNY Buffalo) and a Data Science Specialization certificate from Johns Hopkins University. Prior to joining Verisk, Sneha has worked as a software developer in France to build android solutions and collaborated on a paper publication with Brigham Young University, Utah. Kate Riordan is the Director of Automation Initiatives at Verisk. She currently is the product owner for Discovery Navigator, an AI powered tool that automatically enables identification and retrieval of key data points within large unstructured documents and oversees automation and efficiency projects. Kate began her career at Verisk as a Medicare Set Aside compliance attorney. In that role, she completed and obtained CMS approval of hundreds of Medicare Set Asides. She is fluent in Section 111 reporting requirements, the conditional payment recovery process, Medicare Advantage, Part D and Medicaid recovery. Kate is a member of the Massachusetts bar.

Kate Riordan is the Director of Automation Initiatives at Verisk. She currently is the product owner for Discovery Navigator, an AI powered tool that automatically enables identification and retrieval of key data points within large unstructured documents and oversees automation and efficiency projects. Kate began her career at Verisk as a Medicare Set Aside compliance attorney. In that role, she completed and obtained CMS approval of hundreds of Medicare Set Asides. She is fluent in Section 111 reporting requirements, the conditional payment recovery process, Medicare Advantage, Part D and Medicaid recovery. Kate is a member of the Massachusetts bar. Ryan Doty is a Sr. Solutions Architect at AWS, based out of New York. He helps enterprise customers in the Northeast U.S. accelerate their adoption of the AWS Cloud by providing architectural guidelines to design innovative and scalable solutions. Coming from a software development and sales engineering background, the possibilities that the cloud can bring to the world excite him.

Ryan Doty is a Sr. Solutions Architect at AWS, based out of New York. He helps enterprise customers in the Northeast U.S. accelerate their adoption of the AWS Cloud by providing architectural guidelines to design innovative and scalable solutions. Coming from a software development and sales engineering background, the possibilities that the cloud can bring to the world excite him. Tarik Makota is a Principal Solutions Architect with Amazon Web Services. He provides technical guidance, design advice, and thought leadership to AWS’ customers across the US Northeast. He holds an M.S. in Software Development and Management from Rochester Institute of Technology.

Tarik Makota is a Principal Solutions Architect with Amazon Web Services. He provides technical guidance, design advice, and thought leadership to AWS’ customers across the US Northeast. He holds an M.S. in Software Development and Management from Rochester Institute of Technology. Dom Bavaro is a Senior Solutions Architect for Financial Services. While providing technical guidance to customers across many use cases, He is focused on helping customer build and productionize Generative AI solutions and workflows.

Dom Bavaro is a Senior Solutions Architect for Financial Services. While providing technical guidance to customers across many use cases, He is focused on helping customer build and productionize Generative AI solutions and workflows.

Tyler Geary is a Solutions Architect at Amazon Web Services (AWS), where he is a member of the Enterprise Financial Services team, focusing on Insurance customers. He helps his customers identify business challenges and opportunities, tying them back to innovative solutions powered by AWS, with a particular focus on Generative AI. In his free time, Tyler enjoys hiking, camping, and spending time in the great outdoors.

Tyler Geary is a Solutions Architect at Amazon Web Services (AWS), where he is a member of the Enterprise Financial Services team, focusing on Insurance customers. He helps his customers identify business challenges and opportunities, tying them back to innovative solutions powered by AWS, with a particular focus on Generative AI. In his free time, Tyler enjoys hiking, camping, and spending time in the great outdoors. Sumeet Tripathi is an Enterprise Support Lead (TAM) at AWS in North Carolina. He has over 17 years of experience in technology across various roles. He is passionate about helping customers to reduce operational challenges and friction. His focus area is AI/ML and Energy & Utilities Segment. Outside work, He enjoys traveling with family, watching cricket and movies.

Sumeet Tripathi is an Enterprise Support Lead (TAM) at AWS in North Carolina. He has over 17 years of experience in technology across various roles. He is passionate about helping customers to reduce operational challenges and friction. His focus area is AI/ML and Energy & Utilities Segment. Outside work, He enjoys traveling with family, watching cricket and movies. Vishal Naik is a Sr. Solutions Architect at Amazon Web Services (AWS). He is a builder who enjoys helping customers accomplish their business needs and solve complex challenges with AWS solutions and best practices. His core area of focus includes Generative AI and Machine Learning. In his spare time, Vishal loves making short films on time travel and alternate universe themes.

Vishal Naik is a Sr. Solutions Architect at Amazon Web Services (AWS). He is a builder who enjoys helping customers accomplish their business needs and solve complex challenges with AWS solutions and best practices. His core area of focus includes Generative AI and Machine Learning. In his spare time, Vishal loves making short films on time travel and alternate universe themes.

Neel Sendas is a Principal Technical Account Manager at Amazon Web Services. Neel works with enterprise customers to design, deploy, and scale cloud applications to achieve their business goals. He has worked on various ML use cases, ranging from anomaly detection to predictive product quality for manufacturing and logistics optimization. When he isn’t helping customers, he dabbles in golf and salsa dancing.

Neel Sendas is a Principal Technical Account Manager at Amazon Web Services. Neel works with enterprise customers to design, deploy, and scale cloud applications to achieve their business goals. He has worked on various ML use cases, ranging from anomaly detection to predictive product quality for manufacturing and logistics optimization. When he isn’t helping customers, he dabbles in golf and salsa dancing. Ashish Rawat is a Senior AI/ML Specialist Solutions Architect at Amazon Web Services, based in Atlanta, Georgia. Ashish has extensive experience in Enterprise IT architecture and software development including AI/ML and generative AI. He is instrumental in guiding customers to solve complex business challenges and create competitive advantage using AWS AI/ML services.

Ashish Rawat is a Senior AI/ML Specialist Solutions Architect at Amazon Web Services, based in Atlanta, Georgia. Ashish has extensive experience in Enterprise IT architecture and software development including AI/ML and generative AI. He is instrumental in guiding customers to solve complex business challenges and create competitive advantage using AWS AI/ML services.