Many languages spoken worldwide cover numerous regional varieties (sometimes called dialects), such as Brazilian and European Portuguese or Mainland and Taiwan Mandarin Chinese. Although such varieties are often mutually intelligible to their speakers, there are still important differences. For example, the Brazilian Portuguese word for “bus” is ônibus, while the European Portuguese word is autocarro. Yet, today’s machine translation (MT) systems typically do not allow users to specify which variety of a language to translate into. This may lead to confusion if the system outputs the “wrong” variety or mixes varieties in an unnatural way. Also, region-unaware MT systems tend to favor whichever variety has more data available online, which disproportionately affects speakers of under-resourced language varieties.

In “FRMT: A Benchmark for Few-Shot Region-Aware Machine Translation”, accepted for publication in Transactions of the Association for Computational Linguistics, we present an evaluation dataset used to measure MT systems’ ability to support regional varieties through a case study on Brazilian vs. European Portuguese and Mainland vs. Taiwan Mandarin Chinese. With the release of the FRMT data and accompanying evaluation code, we hope to inspire and enable the research community to discover new ways of creating MT systems that are applicable to the large number of regional language varieties spoken worldwide.

Challenge: Few-Shot Generalization

Most modern MT systems are trained on millions or billions of example translations, such as an English input sentence and its corresponding Portuguese translation. However, the vast majority of available training data doesn’t specify what regional variety the translation is in. In light of this data scarcity, we position FRMT as a benchmark for few-shot translation, measuring an MT model’s ability to translate into regional varieties when given no more than 100 labeled examples of each language variety. MT models need to use the linguistic patterns showcased in the small number of labeled examples (called “exemplars”) to identify similar patterns in their unlabeled training examples. In this way, models can generalize, producing correct translations of phenomena not explicitly shown in the exemplars.

An illustration of a few-shot MT system translating the English sentence, “The bus arrived,” into two regional varieties of Portuguese: Brazilian (🇧🇷; left) and European (🇵🇹; right).

Few-shot approaches to MT are attractive because they make it much easier to add support for additional regional varieties to an existing system. While our work is specific to regional varieties of two languages, we anticipate that methods that perform well will be readily applicable to other languages and regional varieties. In principle, those methods should also work for other language distinctions, such as formality and style.

Data Collection

The FRMT dataset consists of partial English Wikipedia articles, sourced from the Wiki40b dataset, that have been translated by paid, professional translators into different regional varieties of Portuguese and Mandarin. In order to highlight key region-aware translation challenges, we designed the dataset using three content buckets: (1) Lexical, (2) Entity, and (3) Random.

- The Lexical bucket focuses on regional differences in word choice, such as the “ônibus” vs. “autocarro” distinction when translating a sentence with the word “bus” into Brazilian vs. European Portuguese, respectively. We manually collected 20-30 terms that have regionally distinctive translations according to blogs and educational websites, and filtered and vetted the translations with feedback from volunteer native speakers from each region. Given the resulting list of English terms, we extracted texts of up to 100 sentences each from the associated English Wikipedia articles (e.g., bus). The same process was carried out independently for Mandarin.

- The Entity bucket is populated in a similar way and concerns people, locations or other entities strongly associated with one of the two regions in question for a given language. Consider an illustrative sentence like, “In Lisbon, I often took the bus.” In order to translate this correctly into Brazilian Portuguese, a model must overcome two potential pitfalls:

  - The strong geographical association between Lisbon and Portugal might influence a model to generate a European Portuguese translation instead, e.g., by selecting “autocarro” rather than “ônibus”.

  - Replacing “Lisbon” with “Brasília” might be a naive way for a model to localize its output toward Brazilian Portuguese, but would be semantically inaccurate, even in an otherwise fluent translation.

- The Random bucket is used to check that a model correctly handles other diverse phenomena, and consists of text from 100 randomly sampled articles from Wikipedia’s “featured” and “good” collections.

Evaluation Methodology

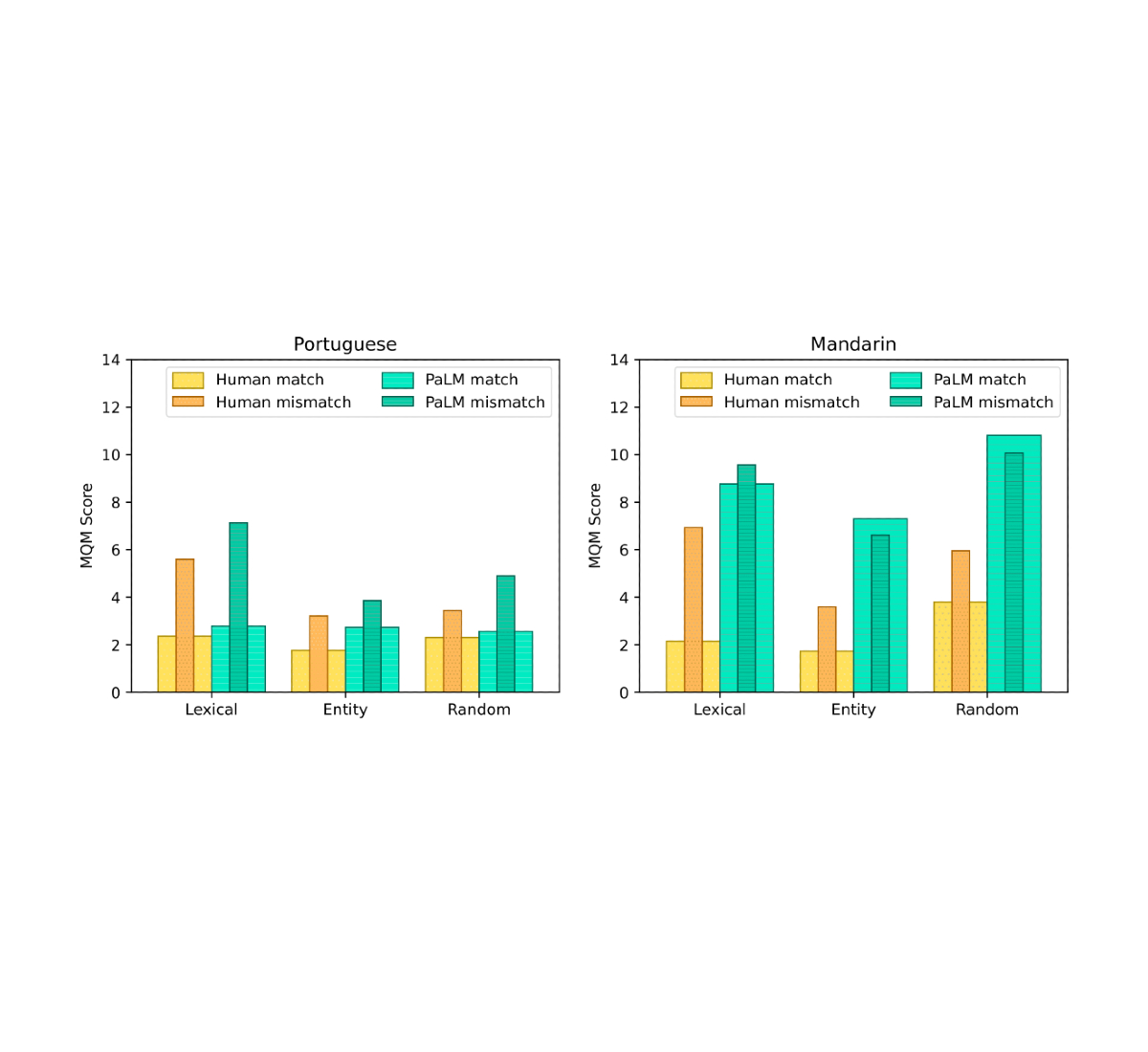

To verify that the translations collected for the FRMT dataset capture region-specific phenomena, we conducted a human evaluation of their quality. Expert annotators from each region used the Multi-dimensional Quality Metrics (MQM) framework to identify and categorize errors in the translations. The framework includes a category-wise weighting scheme to convert the identified errors into a single score that roughly represents the number of major errors per sentence; so a lower number indicates a better translation. For each region, we asked MQM raters to score both translations from their region and translations from their language’s other region. For example, Brazilian Portuguese raters scored both the Brazilian and European Portuguese translations. The difference between these two scores indicates the prevalence of linguistic phenomena that are acceptable in one variety but not the other. We found that in both Portuguese and Chinese, raters identified, on average, approximately two more major errors per sentence in the mismatched translations than in the matched ones. This indicates that our dataset truly does capture region-specific phenomena.
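
The matched-versus-mismatched comparison described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the severity labels, the weights in `ERROR_WEIGHTS`, and the annotations are all made up for the example, and the sketch weights errors by severity alone rather than by the category-wise scheme the MQM framework uses.

```python
from typing import Dict, List

# Hypothetical weights, scaled so that one major error counts as 1.0.
# The actual weighting used for FRMT is not given in this post.
ERROR_WEIGHTS: Dict[str, float] = {"major": 1.0, "minor": 0.2}


def mqm_score(sentence_errors: List[List[str]],
              weights: Dict[str, float] = ERROR_WEIGHTS) -> float:
    """Return the average weighted error count per sentence (lower is better)."""
    if not sentence_errors:
        raise ValueError("need at least one rated sentence")
    total = sum(weights[severity]
                for errors in sentence_errors
                for severity in errors)
    return total / len(sentence_errors)


# Made-up annotations: one list of error severities per rated sentence.
matched = [["minor"], [], ["major"]]
mismatched = [["major", "major"], ["major", "minor"], ["major", "major", "major"]]

# A positive gap means raters found more errors in the other region's variety.
gap = mqm_score(mismatched) - mqm_score(matched)
print(f"matched={mqm_score(matched):.2f} "
      f"mismatched={mqm_score(mismatched):.2f} gap={gap:.2f}")
```

A larger gap suggests that more of the text is acceptable in one variety but not the other, which is the signal the evaluation relies on.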

While human evaluation is the best way to be sure of model quality, it is often slow and expensive. We therefore wanted to find an existing automatic metric that researchers can use to evaluate their models on our benchmark, and considered chrF, BLEU, and BLEURT. Using the translations from a few baseline models that were also evaluated by our MQM raters, we discovered that BLEURT has the best correlation with human judgments, and that the strength of that correlation (0.65 Pearson correlation coefficient, ρ) is comparable to the inter-annotator consistency (0.70 intraclass correlation).

| Metric | Pearson’s ρ |
| --- | --- |
| chrF | 0.48 |
| BLEU | 0.58 |
| BLEURT | 0.65 |

Correlation between different automatic metrics and human judgements of translation quality on a subset of FRMT. Values are between -1 and 1; higher is better.
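
For readers who want to run a similar check on their own systems, the Pearson correlation between an automatic metric and human scores can be computed as in the minimal sketch below. The scores are invented for illustration and are not FRMT data. The sketch also assumes both score lists are oriented so that higher means better, so MQM scores, where lower is better, would need to be negated first.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least two items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / math.sqrt(var_x * var_y)


# Invented per-segment scores: an automatic metric and human quality ratings.
metric_scores = [0.62, 0.71, 0.55, 0.80, 0.47]
human_scores = [3.0, 4.0, 2.5, 4.5, 3.5]

print(f"Pearson correlation: {pearson(metric_scores, human_scores):.2f}")
```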

System Performance

Our evaluation covered a handful of recent models capable of few-shot control. Based on human evaluation with MQM, the baseline methods all showed some ability to localize their output for Portuguese, but for Mandarin, they mostly failed to use knowledge of the targeted region to produce superior Mainland or Taiwan translations.

Google’s recent language model, PaLM, was rated best overall among the baselines we evaluated. In order to produce region-targeted translations with PaLM, we feed an instructive prompt into the model and then generate text from it to fill in the blank (see the example shown below).

Translate the following texts from English to European Portuguese.

English: [English example 1].

European Portuguese: [correct translation 1].

...

English: [input].

European Portuguese: _____
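
The prompt format above can be assembled programmatically. The sketch below is one possible way to do so, not the code used in our experiments: `build_prompt` is a hypothetical helper, the exemplar pair is illustrative, and the call that sends the prompt to a language model is deliberately left out.

```python
from typing import List, Tuple


def build_prompt(target_variety: str,
                 exemplars: List[Tuple[str, str]],
                 source_text: str) -> str:
    """Assemble a few-shot translation prompt for one regional variety."""
    lines = [
        f"Translate the following texts from English to {target_variety}.",
        "",
    ]
    # Each exemplar is an (English text, region-specific translation) pair.
    for english, translation in exemplars:
        lines += [f"English: {english}", "", f"{target_variety}: {translation}", ""]
    # The final line is left open for the model to complete.
    lines += [f"English: {source_text}", "", f"{target_variety}:"]
    return "\n".join(lines)


prompt = build_prompt(
    "European Portuguese",
    [("The bus arrived.", "O autocarro chegou.")],  # illustrative exemplar
    "In Lisbon, I often took the bus.",
)
print(prompt)
```

Changing the target region only requires a different variety name and exemplar set, which is what makes the few-shot setup easy to extend to new varieties.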

PaLM obtained strong results using a single example, and had marginal quality gains on Portuguese when increasing to ten examples. This performance is impressive when taking into consideration that PaLM was trained in an unsupervised way. Our results also suggest language models like PaLM may be particularly adept at memorizing region-specific word choices required for fluent translation. However, there is still a significant performance gap between PaLM and human performance. See our paper for more details.

MQM performance across dataset buckets using human and PaLM translations. Thick bars represent the region-matched case, where raters from each region evaluate translations targeted at their own region. Thin, inset bars represent the region-mismatched case, where raters from each region evaluate translations targeted at the other region. Human translations exhibit regional phenomena in all cases. PaLM translations do so for all Portuguese buckets and the Mandarin lexical bucket only.

Conclusion

In the near future, we hope to see a world where language generation systems, especially machine translation, can support all speaker communities. We want to meet users where they are, generating language fluent and appropriate for their locale or region. To that end, we have released the FRMT dataset and benchmark, enabling researchers to easily compare performance for region-aware MT models. Validated via our thorough human-evaluation studies, the language varieties in FRMT have significant differences that outputs from region-aware MT models should reflect. We are excited to see how researchers utilize this benchmark in development of new MT models that better support under-represented language varieties and all speaker communities, leading to improved equitability in natural-language technologies.

Acknowledgements

We gratefully acknowledge our paper co-authors for all their contributions to this project: Timothy Dozat, Xavier Garcia, Dan Garrette, Jason Riesa, Orhan Firat, and Noah Constant. For helpful discussion and comments on the paper, we thank Jacob Eisenstein, Noah Fiedel, Macduff Hughes and Mingfei Lau. For essential feedback around specific regional language differences, we thank Andre Araujo, Chung-Ching Chang, Andreia Cunha, Filipe Gonçalves, Nuno Guerreiro, Mandy Guo, Luis Miranda, Vitor Rodrigues and Linting Xue. For logistical support in collecting human translations and ratings, we thank the Google Translate team. We thank the professional translators and MQM raters for their role in producing the dataset. We also thank Tom Small for providing the animation in this post.