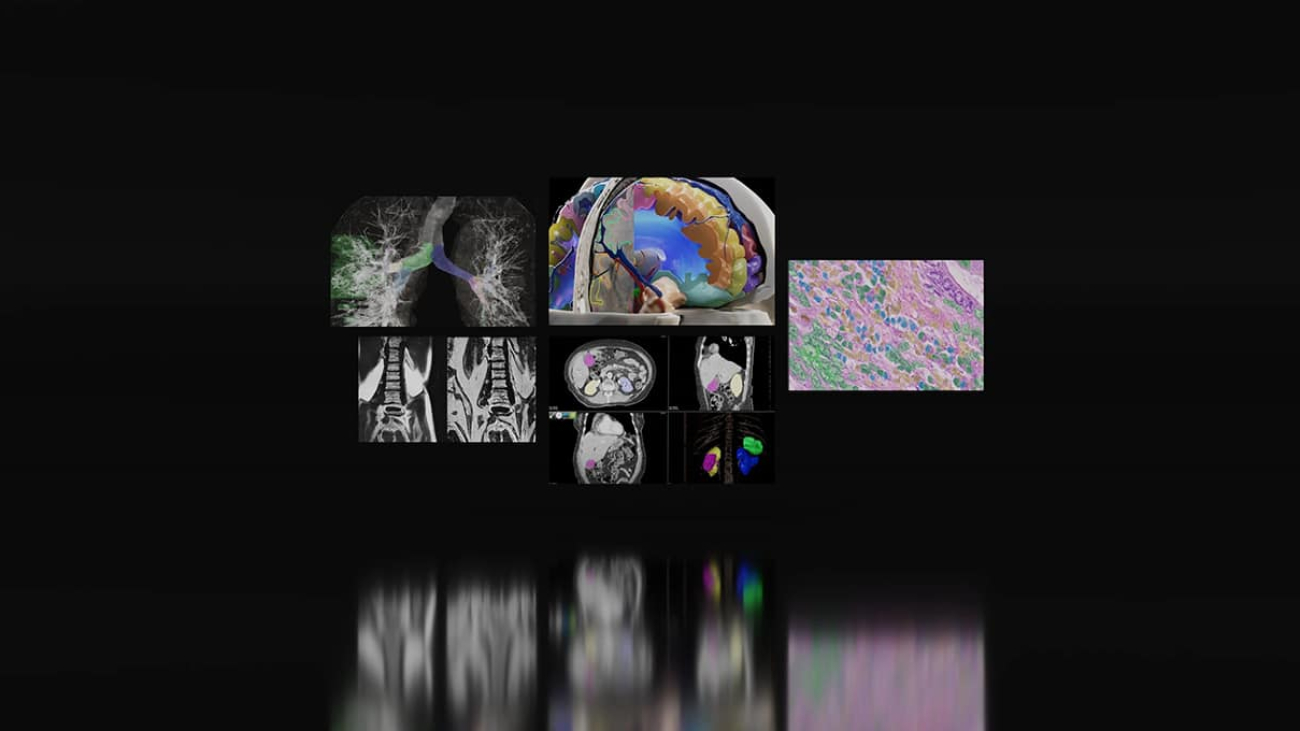

A committee of experts from top U.S. medical centers and research institutes is using NVIDIA-powered federated learning to evaluate how federated learning and AI-assisted annotation affect the training of AI models for tumor segmentation.

Federated learning is a technique for developing more accurate, generalizable AI models trained on data across diverse data sources without compromising data security or privacy. It allows several organizations to collaborate on the development of an AI model without sensitive data ever leaving their servers.

“Due to privacy and data management constraints, it’s growing more and more complicated to share data from site to site and aggregate it in one place — and imaging AI is developing faster than research institutes can set up data-sharing contracts,” said John Garrett, associate professor of radiology at the University of Wisconsin–Madison. “Adopting federated learning to build and test models at multiple sites at once is the only way, practically speaking, to keep up. It’s an indispensable tool.”

Garrett is part of the Society for Imaging Informatics in Medicine (SIIM) Machine Learning Tools and Research Subcommittee, a group of clinicians, researchers and engineers that aims to advance the development and application of AI for medical imaging. NVIDIA is a member of SIIM, and has been collaborating with the committee on federated learning projects since 2019.

“Federated learning techniques allow enhanced data privacy and security in compliance with privacy regulations like GDPR, HIPAA and others,” said committee chair Khaled Younis. “In addition, we see improved model accuracy and generalization.”

To support their latest project, the team — including collaborators from Case Western, Georgetown University, the Mayo Clinic, the University of California, San Diego, the University of Florida and Vanderbilt University — tapped NVIDIA FLARE (NVFlare), an open-source framework that includes robust security features, advanced privacy protection techniques and a flexible system architecture.

Through the NVIDIA Academic Grant Program, the committee received four NVIDIA RTX A5000 GPUs, which were distributed across participating research institutes to set up their workstations for federated learning. Additional collaborators used NVIDIA GPUs in the cloud and in on-premises servers, highlighting the flexibility of NVFlare.

Cracking the Code for Federated Learning

Each of six participating medical centers provided data from around 50 medical imaging studies for the project, focused on renal cell carcinoma, a kind of kidney cancer.

“The idea with federated learning is that during training we exchange the model rather than exchange the data,” said Yuankai Huo, assistant professor of computer science and director of the Biomedical Data Representation and Learning Lab at Vanderbilt University.

In a federated learning framework, an initial global model broadcasts model parameters to client servers. Each server uses those parameters to set up a local version of the model that’s trained on the organization’s proprietary data. Then, updated parameters from each of the local models are sent back to the global model, where they’re aggregated to produce a new global model. The cycle repeats until the model’s predictions no longer improve with each training round.
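The training cycle described above can be sketched in a few lines of plain Python. This is a toy illustration only, not NVFlare's API and not the committee's code: a two-parameter linear model stands in for the segmentation network, the function names (`local_train`, `aggregate`, `federated_train`) are hypothetical, and averaging client parameters weighted by sample count (often called federated averaging) is an assumed aggregation rule, since the article does not say which one the team used.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave a site


def local_train(weights: List[float], data: Dataset,
                lr: float = 0.1, epochs: int = 5) -> List[float]:
    """Train a local copy of the model y = w0 + w1 * x on one site's data."""
    w0, w1 = weights
    for _ in range(epochs):
        g0 = g1 = 0.0
        for x, y in data:
            err = (w0 + w1 * x) - y
            g0 += err
            g1 += err * x
        w0 -= lr * g0 / len(data)
        w1 -= lr * g1 / len(data)
    return [w0, w1]


def aggregate(updates: List[List[float]], sizes: List[int]) -> List[float]:
    """Average client parameters, weighted by each site's sample count."""
    total = sum(sizes)
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total
            for i in range(len(updates[0]))]


def mse(weights: List[float], data: Dataset) -> float:
    """Mean squared error of the model on one site's data."""
    w0, w1 = weights
    return sum(((w0 + w1 * x) - y) ** 2 for x, y in data) / len(data)


def federated_train(sites: List[Dataset], max_rounds: int = 100,
                    tol: float = 1e-10) -> List[float]:
    """Repeat broadcast, local training and aggregation until loss stalls."""
    global_w = [0.0, 0.0]
    sizes = [len(d) for d in sites]
    prev_loss = float("inf")
    for _ in range(max_rounds):
        # Broadcast: every site starts the round from the same global parameters.
        updates = [local_train(global_w, d) for d in sites]
        # Only parameters travel back to the server, never the raw data.
        global_w = aggregate(updates, sizes)
        # Each site reports a scalar loss; stop once it no longer improves.
        loss = sum(mse(global_w, d) * n for d, n in zip(sites, sizes)) / sum(sizes)
        if prev_loss - loss < tol:
            break
        prev_loss = loss
    return global_w


# Three hypothetical sites whose private data all follow y = 2x + 1,
# so the global model should land close to [1.0, 2.0].
sites = [
    [(x, 2 * x + 1) for x in (-1.0, 0.0, 1.0)],
    [(x, 2 * x + 1) for x in (-2.0, -1.0, 1.0, 2.0)],
    [(x, 2 * x + 1) for x in (-0.5, 0.5)],
]
print(federated_train(sites))
```

In a real deployment the local step would be full neural-network training on each institution's imaging studies, and a framework such as NVFlare would handle communication, security and orchestration between the server and the sites.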

The group experimented with model architectures and hyperparameters to optimize for training speed, accuracy and the number of imaging studies required to train the model to the desired level of precision.

AI-Assisted Annotation With NVIDIA MONAI

In the first phase of the project, the training data used for the model was labeled manually. For the next phase, the team is using NVIDIA MONAI for AI-assisted annotation to evaluate how model performance differs with training data segmented with the help of AI compared to traditional annotation methods.

“The biggest struggle with federated learning activities is typically that the data at different sites is not tremendously uniform. People use different imaging equipment, have different protocols and just label their data differently,” said Garrett. “By training the federated learning model a second time with the addition of MONAI, we aim to find if that improves overall annotation accuracy.”

The team is using MONAI Label, an image-labeling tool that enables users to develop custom AI annotation apps, reducing the time and effort needed to create new datasets. Experts will validate and refine the AI-generated segmentations before they’re used for model training.

Data for both the manual and AI-assisted annotation phases is hosted on Flywheel, a leading medical imaging data and AI platform that has integrated NVIDIA MONAI into its offerings.

Once the project is complete, the team plans to publish their methodology, annotated datasets and pretrained model to support future work.

“We’re interested in not just exploring these tools,” Garrett said, “but also publishing our work so others can learn and use these tools throughout the medical field.”

Apply for an NVIDIA Academic Grant

The NVIDIA Academic Grant Program advances academic research by providing world-class computing access and resources to researchers. Applications are now open for full-time faculty members at accredited academic institutions who are using NVIDIA technology to accelerate projects in simulation and modeling, generative AI and large language models.

Future application cycles will focus on projects in data science, graphics and vision, and edge AI — including federated learning.
