Sharing knowledge is essential to Google’s research philosophy — it accelerates technological progress and expands capabilities community-wide. Solving complex problems requires bringing together diverse minds and resources collaboratively. This can be accomplished through building local and global connections with multidisciplinary experts and impacted communities. In partnership with these stakeholders, we bring our technical leadership, product footprint, and resources to make progress against some of society’s greatest opportunities and challenges.

We at Google see it as our responsibility to disseminate our work as contributing members of the scientific community and to help train the next generation of researchers. To do this well, collaborating with experts and researchers outside of Google is essential. In fact, just over half of our scientific publications highlight work done jointly with authors outside of Google. We are grateful to work collaboratively across the globe and have only increased our efforts with the broader research community over the past year. In this post, we will talk about some of the opportunities afforded by such partnerships, including:

Addressing social challenges together

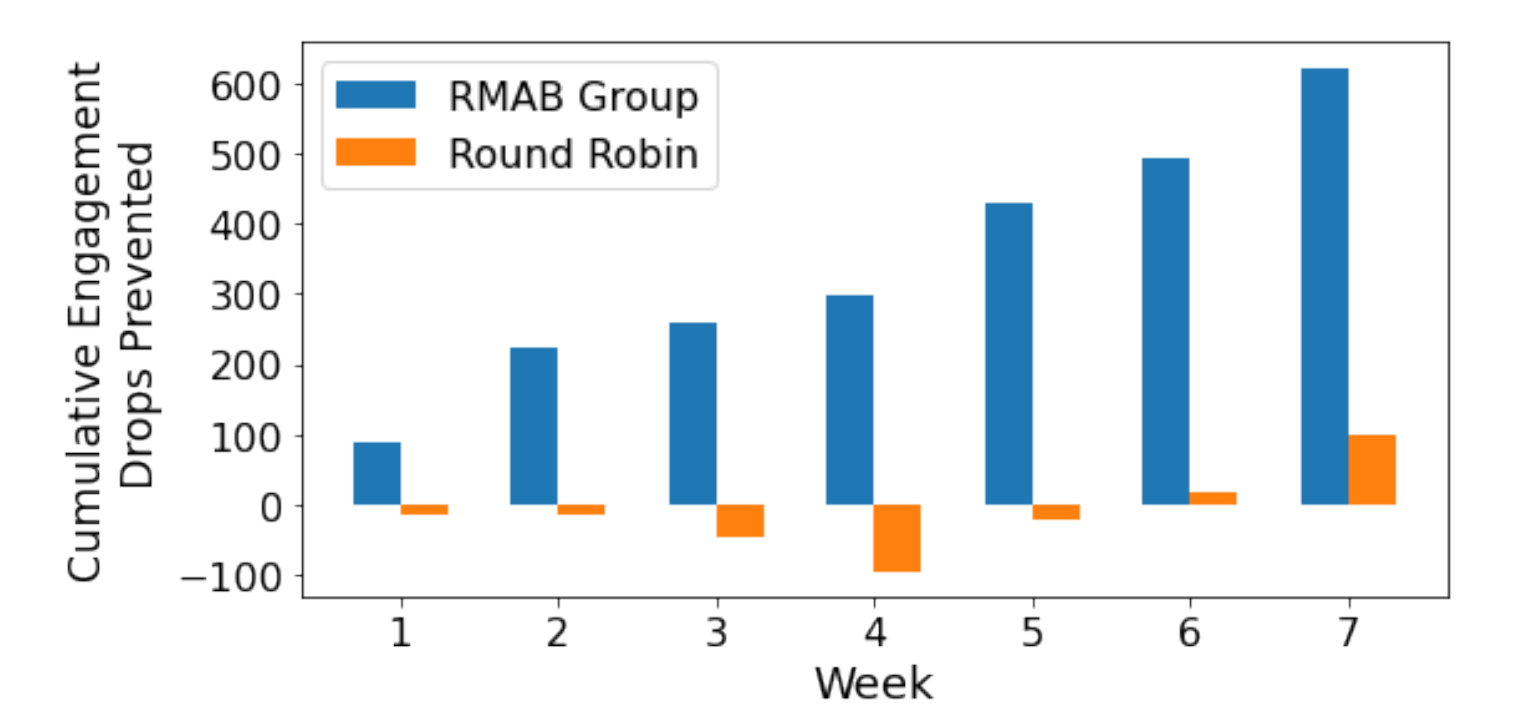

Engaging the wider community helps us progress on seemingly intractable problems. For example, access to timely, accurate health information is a significant challenge among women in rural and densely populated urban areas across India. To solve this challenge, ARMMAN developed mMitra, a free mobile service that sends preventive care information to expectant and new mothers. Adherence to such public health programs is a prevalent challenge, so researchers from Google Research and the Indian Institute of Technology, Madras worked with ARMMAN to design an ML system that alerts healthcare providers about participants at risk of dropping out of the health information program. This early identification helps ARMMAN provide better-targeted support, improving maternal health outcomes.

|

| Google Research worked with ARMMAN to design a system to alert healthcare providers about participants at risk for dropping out of their preventative care information program for expectant mothers. This plot shows the cumulative engagement drops prevented using our restless multi-armed bandit model (RMAB) compared to the control group (Round Robin). |

We also support Responsible AI projects directly for other organizations — including our commitment of $3M to fund the new INSAIT research center based in Bulgaria. Further, to help build a foundation of fairness, interpretability, privacy, and security, we are supporting the establishment of a first-of-its-kind multidisciplinary Center for Responsible AI with a grant of $1M to the Indian Institute of Technology, Madras.

Training the next generation of researchers

Part of our responsibility in guiding how technology affects society is to help train the next generation of researchers. For example, supporting equitable student persistence in computing research through our Computer Science Research Mentorship Program, where Googlers have mentored over one thousand students since 2018 — 86% of whom identify as part of a historically marginalized group.

We work towards inclusive goals and work across the globe to achieve them. In 2022, we expanded our research interactions and programs to faculty and students across Latin America, which included grants to women in computer science in Ecuador. We partnered with ENS, a university in France, to help fund scholarships for students to train through research. Another example is our collaboration with the Computing Alliance of Hispanic-Serving Institutions (CAHSI) to provide $4.8 million to support more than 30 collaborative research projects and over 3,000 Hispanic students and faculty across a network of Hispanic-serving institutions.

Efforts like these foster the research ecosystem and help the community give back. Through exploreCSR, we partner with universities to provide students with introductory experiences in research, such as Rice University’s regional workshop on applications and research in data science (ReWARDS), which was delivered in rural Peru by faculty from Rice. Similarly, one of our Awards for Inclusion Research led to a faculty member helping startups in Africa use AI.

The funding we provide is most often unrestricted and leads to inspiring results. Last year, for example, Kean University was one of 53 institutions to receive an exploreCSR award. It used the funding to create the Research Recruits Program, a two-semester program designed to give undergraduates an introductory opportunity to participate in research with a faculty mentor. A student at Kean with a chronic condition that requires him to take different medications every day, a struggle that affects so many, decided to pursue research on the topic with a peer. Their research, set to be published this year, demonstrates an ML solution, built with Google’s TensorFlow, that can identify pills with 99.8% certainty when used correctly. Results like these are why we continue to invest in younger generations, further demonstrated by our long-term commitment to funding PhD Fellows every year across the globe.

Building an inclusive ecosystem is imperative. To this end, we’ve also partnered with the non-profit Black in Robotics (BiR), formed to address the systemic inequities in the robotics community. Together, we established doctoral student awards that help financially support graduate students and to support BiR’s newly established Bay Area Robotics lab. We also help make global conferences accessible to more researchers around the world, for example, by funding 24 students this year to attend Deep Learning Indaba in Tunisia.

Collaborating to advance scientific innovations

In 2022 Google sponsored over 150 research conferences and even more workshops, which leads to invaluable engagements with the broader research community. At research conferences, Googlers serve on program committees and organize workshops, tutorials and numerous other activities to collectively advance the field. Additionally, last year, we hosted over 14 dedicated workshops to bring together researchers, such as the 2022 Quantum Symposium, which generates new ideas and directions for the research field, further advancing research initiatives. In 2022, we authored 2400 papers, many of which were presented at leading research conferences, such as NeurIPS, EMNLP, ECCV, Interspeech, ICML, CVPR, ICLR, and many others. More than 50% of these papers were authored in collaboration with researchers beyond Google.

Over the past year, we’ve expanded our engagement models to facilitate students, faculty, and Google’s research scientists coming together across schools to form constructive research triads. One such project, undertaken in partnership with faculty and students from Georgia Tech, aims to develop a robot guide dog with human behavior modeling and safe reinforcement learning. Throughout 2022, we gave over 224 grants to researchers and over $10M in Google Cloud Platform credits for topics ranging from the improvement of algorithms for post-quantum cryptography with collaborators at CNRS in France to fostering cybersecurity research at TU Munich and Fraunhofer AISEC in Germany.

In 2022, we made 22 new multi-year commitments totaling over ~$80M to 65 institutions across nine countries, where each year we will host workshops to select over 100 research projects of mutual interest. For example, in a growing partnership, we are supporting the new Max Planck VIA-Center in Germany to work together on robotics. Another large area of investment is a close partnership with four universities in Taiwan (NTU, NCKU, NYCU, NTHU) to increase innovation in silicon chip design and improve competitiveness in semiconductor design and manufacturing. We aim to collaborate by default and were proud to be recently named one of Australia’s top collaborating companies.

Fueling innovation in products and engineering

The community fuels innovation at Google. For example, by facilitating student researchers to work with us on defined research projects, we’ve experienced both incremental and more dramatic improvements. Together with visiting researchers, we combine information, compute power, and a great deal of expertise to bring about breakthroughs, such as leveraging our undersea internet cables to detect earthquakes. Visiting Researchers also worked hand-in-hand with us to develop Minerva, a state-of-the-art solution that came about by training a deep learning model on a dataset that contains quantitative reasoning with symbolic expressions.

|

| Minerva incorporates recent prompting and evaluation techniques to better solve mathematical questions. It then employs majority voting, in which it generates multiple solutions to each question and chooses the most common answer as the solution, thus improving performance significantly. |

Open-sourcing datasets and tools

Engaging with the broader research community is a core part of our efforts to build a more collaborative ecosystem. We support the general advancement of ML and related research through the release of open-source code and datasets. We continued to grow open source datasets in 2022, for example, in natural language processing and vision, and expanded our global index of available datasets in Google Dataset Search. We also continued to release sustainability data via Data Commons and invite others to use it for their research. See some of the datasets and tools we released in 2022 listed below.

| Dataset | Description |

| Auto-Arborist | A multiview urban tree classification dataset that consists of ~2.6M trees covering >320 genera, which can aid in the development of models for urban forest monitoring. |

| Bazel GitHub Metrics | A dataset with GitHub download counts of release artifacts from selected bazelbuild repositories. |

| BC-Z demonstration | Episodes of a robotic arm performing 100 different manipulation tasks. Data for each episode includes the RGB video, the robot’s end-effector positions, and the natural language embedding. |

| BEGIN V2 | A benchmark dataset for evaluating dialog systems and natural language generation metrics. |

| CLSE: Corpus of Linguistically Significant Entities | A dataset of named entities annotated by linguistic experts. It includes 34 languages and 74 different semantic types to support various applications from airline ticketing to video games. |

| CocoChorales | A dataset consisting of over 1,400 hours of audio mixtures containing four-part chorales performed by 13 instruments, all synthesized with realistic-sounding generative models. |

| Crossmodal-3600 | A geographically diverse dataset of 3,600 images, each annotated with human-generated reference captions in 36 languages. |

| CVSS: A Massively Multilingual Speech-to-Speech Translation Corpus | A Common Voice-based Speech-to-Speech translation corpus that includes 2,657 hours of speech-to-speech translation sentence pairs from 21 languages into English. |

| DSTC11 Challenge Task | This challenge evaluates task-oriented dialog systems end-to-end, from users’ spoken utterances to inferred slot values. |

| EditBench | A comprehensive diagnostic and evaluation dataset for text-guided image editing. |

| Few-shot Regional Machine Translation | FRMT is a few-shot evaluation dataset containing en-pt and en-zh bitexts translated from Wikipedia, in two regional varieties for each non-English language (pt-BR and pt-PT; zh-CN and zh-TW). |

| Google Patent Phrase Similarity | A human-rated contextual phrase-to-phrase matching dataset focused on technical terms from patents. |

| Hinglish-TOP | Hinglish-TOP is the largest code-switched semantic parsing dataset with 10k entries annotated by humans, and 170K generated utterances using the CST5 augmentation technique introduced in the paper. |

| ImPaKT | A dataset that contains semantic parsing annotations for 2,489 sentences from shopping web pages in the C4 corpus, corresponding to annotations of 3,719 expressed implication relationships and 6,117 typed and summarized attributes. |

| InFormal | A formality style transfer dataset for four Indic Languages, made up of a pair of sentences and a corresponding gold label identifying the more formal and semantic similarity. |

| MAVERICS | A suite of test-only visual question answering datasets, created from Visual Question Answering image captions with question answering validation and manual verification. |

| MetaPose | A dataset with 3D human poses and camera estimates predicted by the MetaPose model for a subset of the public Human36M dataset with input files necessary to reproduce these results from scratch. |

| MGnify proteins | A 2.4B-sequence protein database with annotations. |

| MiQA: Metaphorical Inference Questions and Answers | MiQA assesses the capability of language models to reason with conventional metaphors. It combines the previously isolated topics of metaphor detection and commonsense reasoning into a single task that requires a model to make inferences by selecting between the literal and metaphorical register. |

| MT-Opt | A dataset of task episodes collected across a fleet of real robots, following the RLDS format to represent steps and episodes. |

| MultiBERTs Predictions on Winogender | Predictions of BERT on Winogender before and after several different interventions. |

| Natural Language Understanding Uncertainty Evaluation | NaLUE is a relabelled and aggregated version of three large NLU corpuses CLINC150, Banks77 and HWU64. It contains 50k utterances spanning 18 verticals, 77 domains, and ~260 intents. |

| NewsStories | A collection of url links to publicly available news articles with their associated images and videos. |

| Open Images V7 | Open Images V7 expands the Open Images dataset with new point-level label annotations, which provide localization information for 5.8k classes, and a new all-in-one visualization tool for better data exploration. |

| Pfam-NUniProt2 | A set of 6.8 million new protein sequence annotations. |

| Re-contextualizing Fairness in NLP for India | A dataset of region and religion-based societal stereotypes in India, with a list of identity terms and templates for reproducing the results from the “Re-contextualizing Fairness in NLP” paper. |

| Scanned Objects | A dataset with 1,000 common household objects that have been 3D scanned for use in robotic simulation and synthetic perception research. |

| Specialized Rater Pools | This dataset comes from a study designed to understand whether annotators with different self-described identities interpret toxicity differently. It contains the unaggregated toxicity annotations of 25,500 comments from pools of raters who self-identify as African American, LGBTQ, or neither. |

| UGIF | A multi-lingual, multi-modal UI grounded dataset for step-by-step task completion on the smartphone. |

| UniProt Protein Names | Data release of ~49M protein name annotations predicted from their amino acid sequence. |

| upwelling irradiance from GOES-16 | Climate researchers can use the 4 years of outgoing longwave radiation and reflected shortwave radiation data to analyze important climate forcers, such as aircraft condensation trails. |

| UserLibri | The UserLibri dataset reorganizes the existing popular LibriSpeech dataset into individual “user” datasets consisting of paired audio-transcript examples and domain-matching text-only data for each user. This dataset can be used for research in speech personalization or other language processing fields. |

| VideoCC | A dataset containing (video-URL, caption) pairs for training video-text machine learning models. |

| Wiki-conciseness | A manually curated evaluation set in English for concise rewrites of 2,000 Wikipedia sentences. |

| Wikipedia Translated Clusters | Introductions to English Wikipedia articles and their parallel versions in 10 other languages, with machine translations to English. Also includes synthetic corruptions to the English versions, to be identified with NLI models. |

| Workload Traces 2022 | A dataset with traces that aim to help system designers better understand warehouse-scale computing workloads and develop new solutions for front-end and data-access bottlenecks. |

| Tool | Description |

| Differential Privacy Open Source Library | An open-source library to enable developers to use analytic techniques based on DP. |

| Mood Board Search | The result of collaborative work with artists, photographers, and image researchers to demonstrate how ML can enable people to visually explore subjective concepts in image datasets. |

| Project Relate | An Android beta app that uses ML to help people with non-standard speech make their voices heard. |

| TensorStore | TensorStore is an open-source C++ and Python library designed for storage and manipulation of n-dimensional data, which can address key engineering challenges in scientific computing through better management and processing of large datasets. |

| The Data Cards Playbook | A Toolkit for Transparency in Dataset Documentation. |

Conclusion

Research is an amplifier, an accelerator, and an enabler — and we are grateful to partner with so many incredible people to harness it for the good of humanity. Even when investing in research that advances our products and engineering, we recognize that, ultimately, this fuels what we can offer our users. We welcome more partners to engage with us and maximize the benefits of AI for the world.

Acknowledgements

Thank you to our many research partners across the globe, including academics, universities, NGOs, and research organizations, for continuing to engage and work with Google on exciting research efforts. There are many teams within GoogIe who make this work possible, including Google’s research teams and community, research partnerships, education, and policy teams. Finally, I would especially like to thank those who provided helpful feedback in the development of this post, including Sepi Hejazi Moghadam, Jill Alvidrez, Melanie Saldaña, Ashwani Sharma, Adriana Budura Skobeltsyn, Aimin Zhu, Michelle Hurtado, Salil Banerjee and Esmeralda Cardenas.

Google Research, 2022 & beyond

This was the ninth and final blog post in the “Google Research, 2022 & Beyond” series. Other posts in this series are listed in the table below: