Google’s AI for Social Good team consists of researchers, engineers, volunteers, and others with a shared focus on positive social impact. Our mission is to demonstrate AI’s societal benefit by enabling real-world value, with projects spanning work in public health, accessibility, crisis response, climate and energy, and nature and society. We believe that the best way to drive positive change in underserved communities is by partnering with change-makers and the organizations they serve.

In this blog post we discuss work done by Project Euphonia, a team within AI for Social Good, that aims to improve automatic speech recognition (ASR) for people with disordered speech. For people with typical speech, an ASR model’s word error rate (WER) can be less than 10%. But for people with disordered speech patterns, such as stuttering, dysarthria and apraxia, the WER could reach 50% or even 90% depending on the etiology and severity. To help address this problem, we worked with more than 1,000 participants to collect over 1,000 hours of disordered speech samples and used the data to show that ASR personalization is a viable avenue for bridging the performance gap for users with disordered speech. We’ve shown that personalization can be successful with as little as 3 to 4 minutes of training speech using layer freezing techniques.
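For reference, WER is conventionally computed by aligning the model’s output (the hypothesis) against a reference transcript with a minimum edit distance alignment over words, and then counting the errors:

```latex
\mathrm{WER} = \frac{S + D + I}{N}
```

Here S, D, and I are the numbers of substituted, deleted, and inserted words, and N is the number of words in the reference transcript. Because insertions are counted, WER can exceed 100%; a WER of 50% corresponds to roughly one error for every two reference words.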

This work led to the development of Project Relate for anyone with atypical speech who could benefit from a personalized speech model. Built in partnership with Google’s Speech team, Project Relate enables people who find it hard to be understood by other people and by technology to train their own models. People can use these personalized models to communicate more effectively and gain more independence. To make ASR more accessible and usable, this post describes how we fine-tuned Google’s Universal Speech Model (USM) to better understand disordered speech out of the box, without personalization, for use in digital assistant technologies, dictation apps, and conversations.

Addressing the challenges

Through working closely with Project Relate users, we learned that personalized models can be very useful, but that for many users, recording dozens or hundreds of examples can be challenging. In addition, the personalized models did not always perform well in freeform conversation.

To address these challenges, Euphonia’s research efforts have focused on speaker-independent ASR (SI-ASR), which makes models work better out of the box for people with disordered speech so that no additional training is necessary.

Prompted Speech dataset for SI-ASR

The first step in building a robust SI-ASR model was to create representative dataset splits. We created the Prompted Speech dataset by splitting the Euphonia corpus into train, validation and test portions, while ensuring that each split spanned a range of speech impairment severity and underlying etiology and that no speakers or phrases appeared in multiple splits. The training portion consists of over 950k speech utterances from over 1,000 speakers with disordered speech. The test set contains around 5,700 utterances from over 350 speakers. Speech-language pathologists manually reviewed all of the utterances in the test set for transcription accuracy and audio quality.
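The post does not describe exactly how the splits were constructed. As a minimal sketch of one way to meet both constraints at once, the code below assigns every speaker and every phrase to a split and keeps an utterance only when the two assignments agree, which makes the splits speaker-disjoint and phrase-disjoint by construction. The `Utterance` fields (`severity`, `etiology`), the 80/10/10 weights, and the stratify-then-filter strategy are all hypothetical assumptions, not Euphonia’s documented procedure; this simple scheme also discards utterances whose speaker and phrase land in different splits.

```python
import random
from collections import defaultdict
from dataclasses import dataclass

SPLITS = ("train", "validation", "test")


@dataclass(frozen=True)
class Utterance:
    speaker: str
    phrase: str
    severity: str  # hypothetical per-speaker label, e.g. "mild" / "severe"
    etiology: str  # hypothetical per-speaker label, e.g. "ALS" / "CP"


def _pick(fraction, weights):
    """Map a position in [0, 1) to a split name using cumulative weights."""
    total = 0.0
    for name, w in zip(SPLITS, weights):
        total += w
        if fraction < total:
            return name
    return SPLITS[-1]


def disjoint_split(utts, weights=(0.8, 0.1, 0.1), seed=0):
    rng = random.Random(seed)

    # 1. Group speakers by (severity, etiology) and spread each group across
    #    the splits, so every split can cover a range of severities/etiologies.
    strata = defaultdict(set)
    for u in utts:
        strata[(u.severity, u.etiology)].add(u.speaker)
    speaker_split = {}
    for key in sorted(strata):
        speakers = sorted(strata[key])
        rng.shuffle(speakers)
        for i, spk in enumerate(speakers):
            speaker_split[spk] = _pick(i / len(speakers), weights)

    # 2. Independently assign every distinct phrase to exactly one split.
    phrase_split = {p: _pick(rng.random(), weights)
                    for p in sorted({u.phrase for u in utts})}

    # 3. Keep an utterance only when its speaker and phrase agree on a split.
    #    Each speaker and each phrase maps to a single split, so no speaker or
    #    phrase can appear in more than one output split.
    out = {name: [] for name in SPLITS}
    for u in utts:
        if speaker_split[u.speaker] == phrase_split[u.phrase]:
            out[speaker_split[u.speaker]].append(u)
    return out
```

A production pipeline would likely balance the splits more carefully (for example, by utterance counts rather than speaker positions), but the agreement filter in step 3 is what enforces the no-overlap guarantee.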

Real Conversation test set

Unprompted or conversational speech differs from prompted speech in several ways. In conversation, people speak faster and enunciate less. They repeat words, repair misspoken words, and use a more expansive vocabulary that is specific and personal to themselves and their community. To improve a model for this use case, we created the Real Conversation test set to benchmark performance.

The Real Conversation test set was created with the help of trusted testers who recorded themselves speaking during conversations. The audio was reviewed, any personally identifiable information (PII) was removed, and then that data was transcribed by speech-language pathologists. The Real Conversation test set contains over 1,500 utterances from 29 speakers.

Adapting USM to disordered speech

We then tuned USM on the training split of the Euphonia Prompted Speech set to improve its performance on disordered speech. Instead of fine-tuning the full model, we based our tuning on residual adapters, a parameter-efficient tuning approach that adds tunable bottleneck layers as residuals between the transformer layers. Only these layers are tuned; the rest of the model weights are left untouched. We have previously shown that this approach works very well for adapting ASR models to disordered speech. Residual adapters were added only to the encoder layers, and the bottleneck dimension was set to 64.
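To make the idea concrete, here is a minimal NumPy sketch of a bottleneck residual adapter (layer norm, down-projection to 64 dimensions, ReLU, up-projection, residual add) interleaved with frozen encoder layers. Only the bottleneck size of 64 comes from the text; the normalization, activation, initialization, and the toy stand-in for USM’s encoder layers are assumptions following the common adapter design, not USM’s actual implementation. Zero-initializing the up-projection, a common choice, makes each adapter an identity map before tuning, so the pre-trained model’s behavior is initially unchanged.

```python
import numpy as np


class ResidualAdapter:
    """Bottleneck adapter: x + W_up(relu(W_down(layer_norm(x))))."""

    def __init__(self, d_model, bottleneck=64, seed=0):
        rng = np.random.default_rng(seed)
        # Layer-norm scale and shift.
        self.gamma = np.ones(d_model)
        self.beta = np.zeros(d_model)
        # Down-projection into the small bottleneck.
        self.w_down = rng.normal(0.0, 0.02, (d_model, bottleneck))
        self.b_down = np.zeros(bottleneck)
        # Zero-initialized up-projection: the adapter starts as an identity map.
        self.w_up = np.zeros((bottleneck, d_model))
        self.b_up = np.zeros(d_model)

    def num_params(self):
        """Count of tunable parameters in this adapter."""
        return sum(p.size for p in (self.gamma, self.beta, self.w_down,
                                    self.b_down, self.w_up, self.b_up))

    def __call__(self, x):
        mu = x.mean(axis=-1, keepdims=True)
        var = x.var(axis=-1, keepdims=True)
        h = (x - mu) / np.sqrt(var + 1e-6) * self.gamma + self.beta
        h = np.maximum(h @ self.w_down + self.b_down, 0.0)  # ReLU bottleneck
        return x + h @ self.w_up + self.b_up                # residual add


def encode(x, frozen_layers, adapters):
    """Run frozen encoder layers, each followed by its tunable adapter.

    During tuning, gradients would update only the adapter parameters;
    the frozen layers are treated as fixed functions.
    """
    for layer, adapter in zip(frozen_layers, adapters):
        x = adapter(layer(x))
    return x
```

For a hypothetical model width of 512, each adapter adds 2 × 512 × 64 projection weights plus biases and layer-norm parameters, 67,136 parameters in total, which is small compared with a full transformer layer of the same width.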

Results

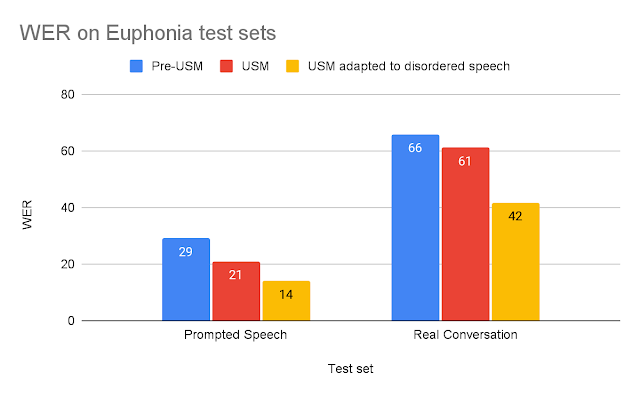

To evaluate the adapted USM, we compared it to older ASR models using the two test sets described above. For each test, we compare adapted USM to the pre-USM model best suited to that task: (1) For short prompted speech, we compare to Google’s production ASR model optimized for short form ASR; (2) for longer Real Conversation speech, we compare to a model trained for long form ASR. USM improvements over pre-USM models can be explained by USM’s relative size increase, 120M to 2B parameters, and other improvements discussed in the USM blog post.

|

| Model word error rates (WER) for each test set (lower is better). |

We see that the USM adapted to disordered speech significantly outperforms the other models. On the Real Conversation test set, the adapted USM’s WER is 37% better than that of the pre-USM model, and on the Prompted Speech test set, the adapted USM performs 53% better.
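The post does not state how “37% better” is computed. Assuming it is the relative WER reduction conventionally reported in ASR work, the arithmetic can be sketched as follows; the function names are hypothetical and the measured WERs themselves are only shown in the figure above.

```python
def relative_wer_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction by which new_wer improves on baseline_wer (0.37 == 37% better)."""
    if baseline_wer <= 0:
        raise ValueError("baseline WER must be positive")
    return (baseline_wer - new_wer) / baseline_wer


def wer_after_reduction(baseline_wer: float, reduction: float) -> float:
    """Inverse: the WER that remains after a given relative reduction."""
    return baseline_wer * (1.0 - reduction)
```

Under this reading, a 37% improvement means the adapted model’s WER is 0.63 times the baseline’s, and a 53% improvement means it is 0.47 times the baseline’s. For example, a hypothetical drop from 40% to 20% WER would be a 50% relative reduction.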

These findings suggest that the adapted USM is significantly more usable for an end user with disordered speech. We can demonstrate this improvement by looking at transcripts of Real Conversation test set recordings from a trusted tester of Euphonia and Project Relate (see below).

| Audio¹ | Ground Truth | Pre-USM ASR | Adapted USM |
|---|---|---|---|
|  | I now have an Xbox adaptive controller on my lap. | i now have a lot and that consultant on my mouth | i now had an xbox adapter controller on my lamp. |
|  | I’ve been talking for quite a while now. Let’s see. | quite a while now | i’ve been talking for quite a while now. |

Example audio and transcriptions of a trusted tester’s speech from the Real Conversation test set.

A comparison of the Pre-USM and adapted USM transcripts revealed some key advantages:

- The first example shows that the adapted USM is better at recognizing disordered speech patterns. The baseline misses key words like “Xbox” and “controller” that are important for a listener to understand what the speaker is trying to say.

- The second example shows how deletions are a primary issue for ASR models that are not trained on disordered speech. Although the baseline model transcribed a portion correctly, a large part of the utterance was not transcribed at all, losing the speaker’s intended message.
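The difference between the two kinds of errors can be made visible by breaking WER into substitutions, deletions, and insertions with the standard word-level Levenshtein alignment. The sketch below does this for the two examples in the table. The text normalization (lowercasing, unifying apostrophes, stripping other punctuation) is a simplifying assumption rather than the scoring pipeline used for the reported results, and the per-utterance numbers are illustrative only.

```python
import re


def normalize(text):
    """Lowercase, unify curly apostrophes, and drop other punctuation (assumed)."""
    text = text.replace("\u2019", "'").lower()
    return re.sub(r"[^\w\s']", "", text)


def wer_breakdown(reference, hypothesis):
    """Return (substitutions, deletions, insertions, wer) for two transcripts."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    n, m = len(ref), len(hyp)
    # dp[i][j] = minimum edits turning ref[:i] into hyp[:j].
    dp = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        dp[i][0] = i
    for j in range(m + 1):
        dp[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(dp[i - 1][j - 1] + cost,  # match or substitution
                           dp[i - 1][j] + 1,         # deletion
                           dp[i][j - 1] + 1)         # insertion
    # Walk back through the table to count each error type.
    s = d = ins = 0
    i, j = n, m
    while i > 0 or j > 0:
        if i > 0 and j > 0 and dp[i][j] == dp[i - 1][j - 1] + (ref[i - 1] != hyp[j - 1]):
            s += ref[i - 1] != hyp[j - 1]
            i, j = i - 1, j - 1
        elif i > 0 and dp[i][j] == dp[i - 1][j] + 1:
            d += 1
            i -= 1
        else:
            ins += 1
            j -= 1
    return s, d, ins, (s + d + ins) / n
```

On the second example, all six of the pre-USM model’s errors are deletions (a WER of 0.6 on that utterance), while the adapted USM drops only “let’s see” (0.2). On the first example, both models make errors, but the adapted USM’s three errors (0.3) are near-miss substitutions such as “adapter” for “adaptive”, compared with six errors (0.6) for the baseline.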

Conclusion

We believe that this work is an important step towards making speech recognition more accessible to people with disordered speech. We are continuing to work on improving the performance of our models. As ASR advances rapidly, we aim to ensure that people with disordered speech benefit as well.

Acknowledgements

Key contributors to this project include Fadi Biadsy, Michael Brenner, Julie Cattiau, Richard Cave, Amy Chung-Yu Chou, Dotan Emanuel, Jordan Green, Rus Heywood, Pan-Pan Jiang, Anton Kast, Marilyn Ladewig, Bob MacDonald, Philip Nelson, Katie Seaver, Joel Shor, Jimmy Tobin, Katrin Tomanek, and Subhashini Venugopalan. We gratefully acknowledge the support Project Euphonia received from members of the USM research team including Yu Zhang, Wei Han, Nanxin Chen, and many others. Most importantly, we want to say a huge thank you to the 2,200+ participants who recorded speech samples and the many advocacy groups who helped us connect with these participants.

¹ Audio volume has been adjusted for ease of listening. The original files, which are more consistent with those used in training, contain pauses, silences, variable volume, etc.